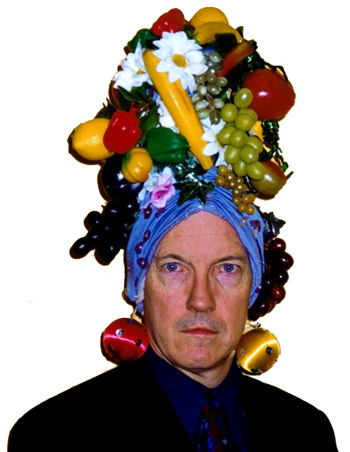

VIDEO TRANSCRIPT: Hello, this is Matt Asher from StatisticsBlog.com. I’m going to be reviewing Mathematica 9, from Wolfram Research. In particular, I’ll be focusing on using it with R and to do Monte Carlo simulations and other statistical work. You can find a full transcript of this video at my blog, including the source code and links to all of the webpages I mention.

Before I begin I’d like to thank Jeff Hara and Andy Ross from Wolfram for their time. Also thanks to the folks at the Mathematica Stack Exchange, who helped with a couple of my questions.

I’m going to get started with a blank notebook. I’m going to clear out all of the variables that exist. I’ve found sometimes that if you have existing variables that can cause problems.

ClearAll["Global`*"]

After each line I’m hitting Shift+Enter to run the command, if you just hit enter Mathematica won’t run things yet.

So I’ve cleared my variables and I’m going to run

Needs["RLink`"]

which will bring in the link between Mathematica and R.

InstallR[]

I’m going to make sure it’s installed.

REvaluate["R.Version()"]

And then I’m going to run a test command here to make sure everything is up and running. As you can see this is the version of R I’m running and the connection succeeded.

Note that the free version of Mathematica, the evaluation version, doesn’t come with support for R, so if you want to test out Mathematica’s and its interactions with R you either have to have to buy the full version or maybe if you call up or contact Wolfram they’d be willing to let you have a free evaluation version that is full and allows you to test out R.

So how does the interface between R and Mathematica work?

Basically, you can run operations in R, then store the results to variables in R. You can also pass data types back and forth between R and Mathematica.

Here I’m setting a variable and this variable is set in R, not in Mathematica

RSet["hal", 9000]

So if I were to type just

hal

There is no response back. This is Mathematica’s way of saying that the variable is undefined or that it doesn’t know what to do with your input. So to get back information from R we have to use:

REvaluate["hal"]

We are putting “hal” in quotes so we are parsing this in R and not in Mathematica.

For example we can do things like grab a dataset from R

iris = REvaluate["iris"]

I’m grabbing the famous “iris” dataset in R and I am pulling it into Mathematica.

or I could do things like evaluate a command in R:

REvaluate["det(matrix(sample(-50:49),nrow=10))"]

and bring back the results. This grabs the determinant of a random matrix.

We can even do things like create our own functions in R, and this gets put into a variable in Mathematica.

perfectSample = RFunction["function(n, dist, ...) match.fun(paste('q', dist, sep=''))((1:n) / (n+1), ...)"]

This function creates a perfect sample of the length that you specify of a particular distribution. Then we can call that function directly in Mathematica.

perfectSample[100, "pois", 10]

and the results are returned.

Of course, if we just wanted to do things in R, we would be continuing to just use R, instead of having to learn this new interface between R and Mathematica. So then what can we do in Mathematica that goes beyond what we can easily do in R?

One of the biggest advantages to using Mathematica is that you get access to tools for creating interactive plots and simulations that can be changed on the fly.

I’m going to do an example using the Benini Distribution, which, according to Wolfram’s web page, can be used to model the weight of cats.

So to do that, what I’m going to do is use the Mathematica command “Manipulate”

Manipulate[Block[{data, dist, kmd},

data = RandomVariate[dist = BeniniDistribution[\[Alpha],\[Beta], \[Sigma]], n];

kmd = KernelMixtureDistribution[data, h, MaxMixtureKernels -> All];

Plot[{PDF[dist, x], PDF[kmd, x]}, {x, 0, xRng},

PlotRange -> Automatic]], {{\[Alpha], 1}, .01, 5}, {{\[Beta], 1}, 0.01,

5}, {{\[Sigma], 1}, .01, 2}, {{n, 100}, {10, 100, 1000, 10000}}, {{xRng, 5}, 1,

10}, {{h, .5}, 0.01, 1}]

And then I get back the results and what I’ve got here is a live Monte Carlo simulation where I am specifying the different parameters of the distribution and I’m also specifying how many variates that I’m creating. This is the smoothing, the kernel bandwidth that I am adjusting.

And I can adjust the size of it here. Make it bigger. And do all of these adjustments on the fly.

As you can see, you’ve got some good power here for generating interactive plots and simulations. You can do these natively in Mathematica, or you do live manipulation of results coming from R. This example comes from the Mathematica guys:

mathematicaRPlotWrapper = RFunction["function(filename, plotfun){

pdf(filename)

plotfun()

dev.off()

}"];

Clear[getRPlot];

getRPlot[plotFun_RFunction] :=

With[{tempfile = FileNameJoin[{$TemporaryDirectory, "temp.pdf"}]},

If[FileExistsQ[tempfile], DeleteFile[tempfile]];

mathematicaRPlotWrapper[tempfile, plotFun];

If[! FileExistsQ[tempfile], Return[$Failed]];

Import[tempfile]];

myFun[t_] :=

Show[#, ImageSize -> Medium, PlotRange -> All] &@

getRPlot[RFunction["function(){

x<- seq(1," <> ToString@t <> ",0.1)

y<- sin(x)

plot(y~x)

}"]]

What's going to happen here is I am calling an R function, doing all of my calculations, bringing them back into Mathematica.

I forgot the "Manipulate" part:

Manipulate[myFun[t], {t, 2, 10}]

So here we go. And what's happening is everything is being sent to R for processing then coming all the way back to Mathematica. As you can see even though we are making that round trip the results are coming back at a good pace, it's almost instantaneous this particular example.

What about speed though, more generally?

I tried creating some random variates like I did in my examination of JavaScript versus R. So I'm going to create 10 million random variates from a Normal distribution

Timing[data = RandomVariate[NormalDistribution[], 10^7];]

and that takes about a quarter of a second, a little bit more, which is about twice as fast as R for generating those.

But then let's look at more of a worst-case scenario, a bunch of nested loops.

Timing[

l = 0;

For [i = 0, i < 10^2, i = i + 1,

For[j = 0, j < 10^2, j = j + 1,

For[k = 0, k < 10^2, k = k + 1,

l = l + 1

]

]

]

]

Three of them, a total of a million loops it's going through, and this takes about 1.2 seconds, and it will increase by an order of magnitude if I add an order of magnitude in here. That is slow, it's about twice as slow as the same code would take to run in R, a language not known for it's speed with loops. Of course, this is generally speaking not what you want to do if you want to run fast code. Mathematica itself on their website advises against using these kinds of procedural codes.

But at the same time I've found that there are times when there really is no other way to do things, especially if you are doing simulations with a bunch of objects that take place over time, that are iterative, in which case you do need a programming structure that is like this, so you'll have to keep this in mind in terms of the speed.

Beside the live graphical manipulation of the results, another key benefit to using Mathematica is that you can do direct probability calculations, and sometimes even find closed form solutions for combining random variables.

I've got some code here that determines the probability that one standard normal variable will be greater than another.

Probability[

Subscript[x, 1] > Subscript[x,

2], {Subscript[x, 1] \[Distributed] NormalDistribution[0, 1],

Subscript[x, 2] \[Distributed] NormalDistribution[0, 1]}]

And it is, of course, one-half. That's a simple example. We can do things that are more complicated. I'm going to look here for a more general solution when you have two Normal variables with means μ1 and μ2 and we're going to try and find the probability that one of them is greater than the other.

Probability[

Subscript[x, 1] > Subscript[x,

2], {Subscript[x, 1] \[Distributed]

NormalDistribution[Subscript[\[Mu], 1], 1],

Subscript[x, 2] \[Distributed]

NormalDistribution[Subscript[\[Mu], 2], 1]}]

As you can see it's taking more time to run this calculation. And eventually we get back the response. And we do have a closed form solution.

"Erfc" stands for the complementary error function.

Unfortunately, not all of the problems that I tried to do work and sometimes they just freeze up completely.

Probability[

Subscript[x, 3] > Subscript[x,

4], {Subscript[x, 3] \[Distributed] PoissonDistribution[5],

Subscript[x, 4] \[Distributed] PoissonDistribution[10]}]

Here I'm running a query to Mathematica to try and find the probability that a Poisson(5) will be greater than a Poission(10) random variable. I found that this just freezes up and will not return a response. Though I only waited a certain number of minutes. Actually, one time it locked my compter up entirely, the other time I gave up after a few minutes. I'm going to hit Alt-comma to abort the command and back out of that.

So, by comparison to R, you can do the same calculation of two Poissions. I'm going to make sure that's run in R:

x = rpois(10^6,5); y=rpois(10^6,10); z = x

(NOTE: This code actually finds the probability that a Poission(5) is LESS than a Poission(10))

As you can see I've run that a couple times already, and it takes about .9 seconds to run this. Of course, this is not trying to find a closed form solution, but for me anyway, these are perfectly good solutions, numerical solutions, to the problems.

So, going back in to Mathematica. Besides getting closed form solutions for probabilities, you can combine distributions, do convolutions and see what kind of formula you get back out.

I found this worked fairly well for simple, well-know distributions:

dist = TransformedDistribution[u + v,

{u \[Distributed] NormalDistribution[μ1, σ1],

v \[Distributed] NormalDistribution[μ2, σ2]}];

PDF[dist, x]

I do it here for the Normal. I'm adding two Normally distributed variables together and we get back out very quickly the formula for that.

But what happens if we try to work with a less well known distribution. In fact, lets go ahead and see what happens if we want to add together cats and find out the final weight distribution.

dist = TransformedDistribution[u + v,

{u \[Distributed] BeniniDistribution[\[Alpha]1,\[Beta]1,\[Sigma]1],

v \[Distributed] BeniniDistribution[\[Alpha]2, \[Beta]2,\[Sigma]2]}];

PDF[dist, x]

And, apparently, cats don't add so well. I tried this one as well a couple times and wasn't able to get results returned from Mathematica unfortunately.

So, besides these bugs and issues, there are a couple other significant downsides to Mathematica. Sharing results over the Internet can be done: you can export notebooks to HTML, but people if they want to use the interactive graphs they'll need to have a special browser plugin installed. I asked the guys from Wolfram if they know what percent of web users already have it installed. They didn't know, I suspect the number is very low, much lower than, say, the number who have Flash installed. Of course R by itself doesn't provide much support for sharing results over the web, though Rstudio makes something called Shiny that can do some exporting over the web. I haven't checked that out very much yet. I plan to do that soon.

So, beyond sharing, the biggest impediment to using Mathematica on a daily basis is the interface. The GUI. I'll bring in some of the buttons here, the palettes of buttons. Overall the look is extremely crude. Things are disordered. The floating palettes have buttons of various sizes different places, and it looks essentially like something you might have hacked together in Visual Basic 15 years ago and then hadn't been touched since. Clearly, the modern era of interface design has passed Mathematica by. At this point even open source programs that began with horrific interfaces, like the Gimp, have now begun to focus on making their interfaces look good. And looks may seem superficial, but if you are going to be working with an interface like this, if you are going to be paying $1000 for a license of Mathematica, I don't think it's too much to expect that the design be easy to use, that it be easy to find things, and that there are cues as to where to find things. Color would certainly go a long way to help with that, as would other cues to help you.

I'm going to bring back in Mathematica here.

Based on the GUI, I wonder... One more thing about the GUI, if you're moving the palettes along it doesn't do ghosting, so it pops back in.

So, despite these issues, I can see using Mathematica as an occasional tool to find exact results for probabilities and distributions, or quickly testing out how changing parameters affects a distribution's shape. For now though, I'm going to continue experimenting with JavaScript as an alternative to R that runs code quickly and also I'll be looking some more into Shiny.

Make sure to check out the links related to this video at StatisticsBlog.com, and if you like these videos click on the subscribe button.