Over the past couple of weeks, I’ve been considering alternatives to R. I’d heard Python was much faster, so I translated a piece of R code with several nested loops into Python (it ran an order of magnitude faster). To find out more about Mathematica 9, I had an extended conversation with some representatives from Wolfram Research (Mathematica can run R code; I’ll post a detailed review soon). And I’ve been experimenting with JavaScript and HTML5’s “canvas” feature.

JavaScript may seem like an unlikely competitor for R, and in many ways it is. It has no repository of statistical analysis packages, doesn’t support vectorization, and requires the additional layer of a web browser to run. This last drawback, though, could be its killer feature. Once a piece of code is written in JavaScript, it can be instantly shared with anyone in the world directly on a web page. No additional software to install, no images to upload separately. And unlike Adobe’s (very slowly dying) Flash, the output renders perfectly on your smartphone. R has dozens of packages and hundreds of options for charts, but the interactivity of these is highly limited. JavaScript has fewer charting libraries, but it does have some which produce nice output.

Nice output? What matters is the content; the rest is just window dressing, right? Not so fast. Visually pleasing, interactive information display is more than window dressing, and it’s more in demand than ever. As statisticians have stepped up their game, consumers of data analysis have come to expect more from their graphics. In my experience, users spend more time looking at graphs that are pleasing, and get more out of charts with (useful) interactive elements. Beyond that, there’s a whole world of simulations which only provide insight if they are visual and interactive.

Pretty legs, but can she type?

Alright, so there are some advantages to using JavaScript when it comes to creating and sharing output, but what about speed? The last time I used JavaScript for a computationally intensive project, I was frustrated by its slow speed and browser (usually IE!) lockups. I’d heard, though, that improvements had been made, and that a new “V8” engine made quick work of even the nastiest JS code. Could it be true?

If there’s one thing I rely on R for, it’s creating random variables. To see if JavaScript could keep up on R’s home court, I ran the following code in R:

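    # Elapsed wall-clock time before and after the draw
    start = proc.time()[3]
    x = rnorm(10^7, 0, 1)
    end = proc.time()[3]
    # end - start, so the elapsed time prints as a positive number
    cat(end - start)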

Time needed to create 10 million standard Normal variates in R? About half a second on my desktop computer. JavaScript has no native function to generate Normals, and while I know very little about how these are created in R, it seemed like cheating to use a simple inverse CDF method (I’ve heard bad things about these, especially when it comes to tails. Can anyone confirm or deny?). After some googling, I found this function by Yu-Jie Lin for generating JS Normals via a “polar” method:

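    function normal_random(mean, variance) {
        // Default to a standard Normal when arguments are omitted.
        if (mean == undefined)
            mean = 0.0;
        if (variance == undefined)
            variance = 1.0;
        // X is declared here so it does not leak into the global scope.
        var V1, V2, S, X;
        // Draw points uniformly in the square [-1, 1] x [-1, 1] and reject
        // any outside the unit circle, or exactly at the origin (log(0)).
        do {
            var U1 = Math.random();
            var U2 = Math.random();
            V1 = 2 * U1 - 1;
            V2 = 2 * U2 - 1;
            S = V1 * V1 + V2 * V2;
        } while (S >= 1 || S === 0);
        X = Math.sqrt(-2 * Math.log(S) / S) * V1;
        // Y = Math.sqrt(-2 * Math.log(S) / S) * V2;
        X = mean + Math.sqrt(variance) * X;
        // Y = mean + Math.sqrt(variance) * Y;
        return X;
    }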

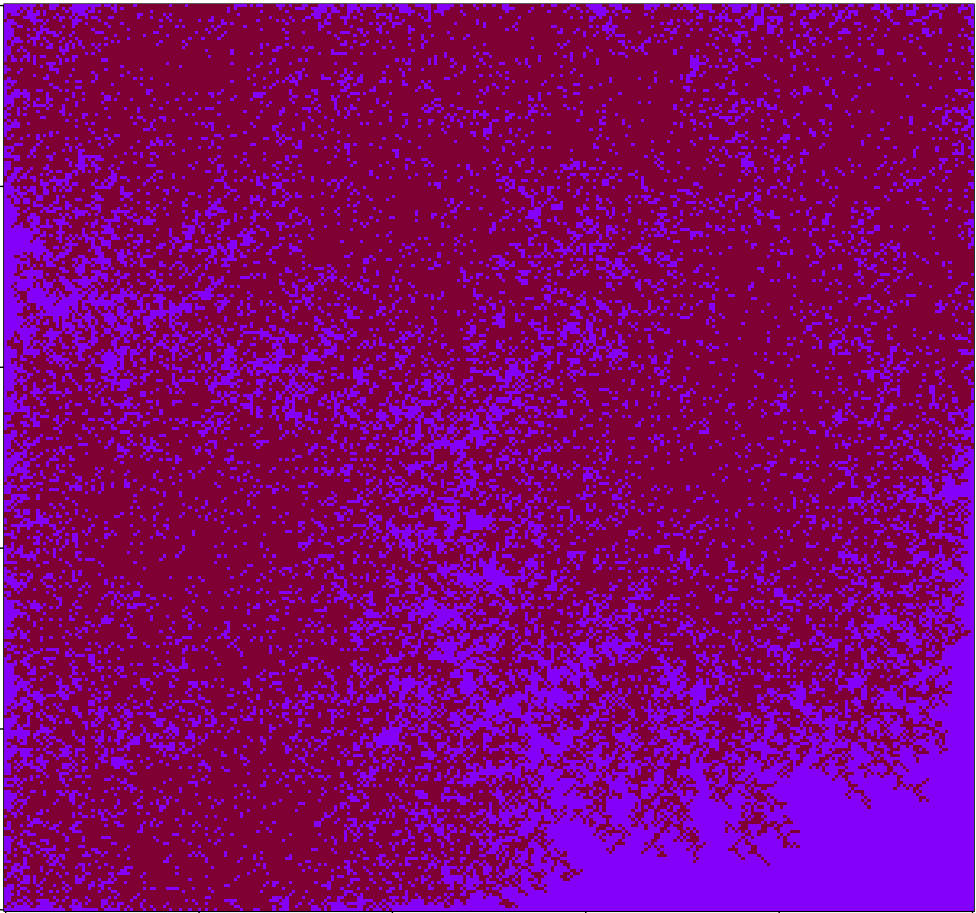
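For the JavaScript side of the comparison, a timing loop along the following lines would do the job. This is a minimal sketch, not the exact code behind the timings reported here: `normalRandom` is a compact copy of the polar-method function above, while `timeNormals`, the use of `Date.now()`, and the `Float64Array` are assumptions made for illustration (a plain array works too).

```javascript
// Compact copy of the polar-method generator shown above.
// Rejects S === 0 as well, so Math.log never sees zero.
function normalRandom(mean = 0, variance = 1) {
  let v1, v2, s;
  do {
    v1 = 2 * Math.random() - 1;
    v2 = 2 * Math.random() - 1;
    s = v1 * v1 + v2 * v2;
  } while (s >= 1 || s === 0);
  const x = Math.sqrt(-2 * Math.log(s) / s) * v1;
  return mean + Math.sqrt(variance) * x;
}

// Hypothetical helper: fill an array with n draws and
// report the elapsed wall-clock time in milliseconds.
function timeNormals(n) {
  const out = new Float64Array(n);
  const start = Date.now();
  for (let i = 0; i < n; i++) {
    out[i] = normalRandom();
  }
  const elapsedMs = Date.now() - start;
  return { out, elapsedMs };
}

// Same workload as the R snippet: 10 million standard Normals.
const result = timeNormals(1e7);
console.log("elapsed ms:", result.elapsedMs);
```

Paste it into the browser console (or run it with Node) to see how your own machine compares. The elapsed time will vary by browser and hardware.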

So how long did it take Yu-Jie’s function to run 10 million times and store the results in an array? In Chrome, it took about half a second, same as in R (in Firefox it took about 3 times as long). Got that? No speed difference between R and JS running in Chrome. For loops, JS seems blazing fast (compared to R). Take another look at the demo simulation I created. Each iteration of the code requires on the order of N-squared operations, and the entire display area is re-rendered from scratch. Try adding new balls using the “+” button and see if your browser keeps up.
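To make the N-squared point concrete, here is a minimal sketch of the kind of all-pairs check a ball simulation might do on every frame. It is not the demo’s actual code: the `{ x, y, r }` ball shape and the `countOverlaps` function are hypothetical, chosen only to show why the work grows so quickly as balls are added.

```javascript
// Hypothetical ball shape: { x, y, r } (centre and radius).
// Compares every pair of balls once and counts the overlapping pairs.
function countOverlaps(balls) {
  let overlaps = 0;
  let checks = 0;
  for (let i = 0; i < balls.length; i++) {
    for (let j = i + 1; j < balls.length; j++) {
      checks++;
      const dx = balls[i].x - balls[j].x;
      const dy = balls[i].y - balls[j].y;
      const minDist = balls[i].r + balls[j].r;
      // Compare squared distances to avoid a square root per pair.
      if (dx * dx + dy * dy < minDist * minDist) {
        overlaps++;
      }
    }
  }
  return { overlaps, checks };
}

const balls = [
  { x: 0, y: 0, r: 1 },
  { x: 1.5, y: 0, r: 1 },
  { x: 10, y: 10, r: 1 },
  { x: 10, y: 11, r: 0.4 },
];
console.log(countOverlaps(balls));
```

With N balls the inner comparison runs N(N-1)/2 times per frame: 6 times for the 4 balls here, but 4,950 times for 100 balls, which is why each press of “+” costs more than the last.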

It’s only a flesh wound!

So have I found the Holy Grail of computer languages for statistical computation? That’s much too strong a statement, especially given the crude state of JS libraries for even basic scientific needs like matrix operations. For now, R is safe. In the long term, though, I suspect the pressures to create easily shared, interactive interfaces, combined with improvements in speed, will push more people to JS/HTML5. Bridges like The Omega Project (has anyone used this?) might speed up the outflow, until people pour out of R and into JavaScript like blood from a butchered knight.